Edge AI in Action: Mastering On-Device Inference

In this tutorial, we show how to develop and deploy edge AI models, using object detection and large language model applications as examples.

Summary

Edge AI deploys artificial intelligence models directly on devices such as smartphones, cameras, sensors, drones, and wearables, allowing them to perform inference locally without relying on the cloud. This approach delivers key advantages, including lower latency, improved privacy, faster responsiveness, and greater energy efficiency.

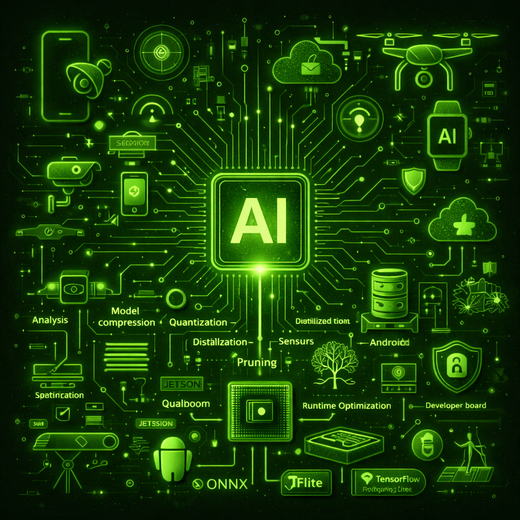

However, running AI models on edge devices requires specialized tools for optimizing model performance, efficiency, and latency. While general-purpose frameworks offer broad compatibility, unlocking the full potential of hardware accelerators, especially those from Qualcomm and NVIDIA, requires a deeper understanding of platform-specific SDKs and engines.

In this CVPR 2026 tutorial, we will present a hands-on, practice-oriented guide to designing, optimizing, and deploying deep learning models on two of the most prominent edge AI platforms: Qualcomm Snapdragon and NVIDIA Jetson. With a focus on computer vision, we will explore real-world applications such as object detection and large language models.

We will showcase leading tools and frameworks, including ONNX, TensorRT, Qualcomm SNPE, the Qualcomm AI Runtime SDK, and NVIDIA's AI Stack, across diverse hardware platforms such as Jabra PanaCast cameras, Qualcomm development boards, Android smartphones, and NVIDIA Jetson AGX Thor. Participants will gain practical insights into the full edge AI pipeline, from model design to real-time deployment.

Topics

We designed this CVPR 2026 tutorial for researchers, engineers, and practitioners seeking to bring AI capabilities to the edge. While prior experience with edge deployment is not required, attendees should have a foundational understanding of computer vision, image analysis, and deep learning. The session will provide both conceptual insights and practical demonstrations, with a strong emphasis on real-world scenarios and hands-on examples. We structured the tutorial into four key modules:

- Introduction to Edge AI Platforms and Hardware Acceleration: Explore the motivation, core principles, and challenges of running AI at the edge. This module will compare edge AI to cloud AI and examine the trade-offs in latency, privacy, power consumption, and performance. Real-world applications, ranging from mobile devices to collaborative cameras, will illustrate the growing relevance and impact of edge AI systems.

- Optimizing and Deploying on Qualcomm Snapdragon: Learn the technical foundations for deploying AI models across Qualcomm-based edge hardware. This module will cover model conversion pipelines, inference optimization, and benchmarking across platforms. We will demonstrate the use of Qualcomm SNPE and Qualcomm AI Runtime SDK to enable fast and efficient deployment of deep learning models on resource-constrained devices.

- Edge AI Model Performance Analysis and Benchmarking: Analyze and evaluate the performance of AI models on Qualcomm-based edge hardware. This module focuses on on-device profiling and execution using Qualcomm’s QNN runtime, enabling systematic benchmarking across different hardware backends (HTP, DSP, GPU, CPU). It emphasizes performance analysis through runtime metrics, quantization evaluation, and analytics dashboards, providing insights into model behavior, efficiency, and accuracy to support informed optimization and reliable deployment on resource-constrained devices.

- Optimizing and Deploying on NVIDIA Jetson: Discover how to leverage NVIDIA Jetson platforms for rapid prototyping and deployment. This module will present the ecosystem of pre-optimized AI models and SDKs for Jetson platforms, with a focus on real-time computer vision tasks. We will walk through tools for model quantization, runtime selection (CPU, GPU, TensorRT), and on-device benchmarking using the TensorRT SDK and the NVIDIA AI Stack.
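Two steps recur in the modules above regardless of platform: measuring on-device latency and checking how much error quantization introduces. The following minimal sketch shows the core logic of both using only the Python standard library. It is not the tutorial's code and does not call any QNN, SNPE, or TensorRT API. `run_inference` is a hypothetical placeholder for whatever runtime call a deployment makes, and it must block until results are ready, because on accelerators that execute asynchronously a non-blocking call would only time the launch. The per-tensor symmetric INT8 scheme is one common choice and is not necessarily the scheme those SDKs apply.

```python
import math
import statistics
import time
from typing import Callable, Dict, List, Sequence, Tuple


def benchmark_latency(
    run_inference: Callable[[], object],
    warmup_runs: int = 5,
    timed_runs: int = 50,
) -> Dict[str, float]:
    """Time a zero-argument inference callable and summarize latency in milliseconds."""
    if timed_runs < 1:
        raise ValueError("timed_runs must be at least 1")

    # Warm-up runs are discarded so one-time costs (lazy initialization,
    # cache population, clock ramp-up) do not skew the measurements.
    for _ in range(warmup_runs):
        run_inference()

    latencies_ms: List[float] = []
    for _ in range(timed_runs):
        start = time.perf_counter()
        run_inference()
        latencies_ms.append((time.perf_counter() - start) * 1000.0)

    ordered = sorted(latencies_ms)

    def percentile(p: float) -> float:
        # Nearest-rank percentile: the smallest sample such that at least p% of samples are <= it.
        rank = max(1, math.ceil(p / 100.0 * len(ordered)))
        return ordered[rank - 1]

    mean_ms = statistics.fmean(latencies_ms)
    return {
        "mean_ms": mean_ms,
        "p50_ms": percentile(50),
        "p90_ms": percentile(90),
        # Sequential throughput implied by the mean, assuming one input per call.
        "throughput_fps": 1000.0 / mean_ms,
    }


def quantize_int8_symmetric(values: Sequence[float]) -> Tuple[List[int], float]:
    """Per-tensor symmetric INT8 quantization: q = clamp(round(x / scale), -127, 127)."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Map the largest magnitude to 127; fall back to scale 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: Sequence[int], scale: float) -> List[float]:
    """Map INT8 codes back to approximate real values."""
    return [q * scale for q in quantized]


def max_abs_error(original: Sequence[float], reconstructed: Sequence[float]) -> float:
    """Largest element-wise deviation introduced by the quantization round trip."""
    return max((abs(a - b) for a, b in zip(original, reconstructed)), default=0.0)


if __name__ == "__main__":
    # A CPU-bound stand-in workload; on a real device this would wrap a model call.
    stats = benchmark_latency(lambda: sum(i * i for i in range(10_000)))
    print({name: round(value, 3) for name, value in stats.items()})

    weights = [0.5, -1.27, 0.003, 1.0]
    codes, scale = quantize_int8_symmetric(weights)
    error = max_abs_error(weights, dequantize_int8(codes, scale))
    print(codes, scale, error)
```

Reporting p50 and p90 alongside the mean is deliberate: on edge hardware, thermal throttling and background load can produce long-tail latencies that a mean alone hides. For in-range values, the round-trip error of this quantizer is bounded by half the scale, which gives a quick sanity check before comparing task-level accuracy.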

Schedule

This is our tutorial schedule. After the tutorial, we will publish the presented slides in this section.

TBD - Opening by Fabricio Batista Narcizo

TBD - Introduction to Edge AI Platforms and Hardware Acceleration by Elizabete Munzlinger

TBD - Optimizing and Deploying on Qualcomm Snapdragon by Fabricio Batista Narcizo and Shan Ahmed Shaffi

TBD - Edge AI Model Performance Analysis and Benchmarking by Zaheer Ahmed

TBD - Resource-Constrained VLM Deployment on Edge AI by Sai Narsi Reddy Donthi Reddy and Shan Ahmed Shaffi

TBD - Closing Remarks and Joint Q&A

Supporting Materials

This section will provide additional resources and materials related to our tutorial. You will find code examples, notebooks, trained models, and other relevant information to help you better understand and implement the concepts discussed during the tutorial.

Tutorial Video

Watch the video of our previous tutorial, "Edge AI in Action: Technologies and Applications," presented at the 2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2025).

Organizers

The development of multimodal AI systems requires expertise across diverse fields, including computer vision, natural language processing, human-computer interaction, signal processing, and machine learning. In this tutorial, we aim to provide both breadth and depth in multimodal interaction research and applications, offering a comprehensive and interdisciplinary perspective. Our primary objective is to inspire the CVPR community to engage with these areas and contribute to these dynamic, rapidly evolving fields.

Fabricio Batista Narcizo

Senior AI Research Scientist

Jabra / IT University of Copenhagen (ITU)

Elizabete Munzlinger

Industrial Ph.D. Candidate

Jabra / IT University of Copenhagen (ITU)

Sai Narsi Reddy Donthi Reddy

Senior AI/ML Researcher

Jabra

Shan Ahmed Shaffi

AI and ML Researcher

Jabra

Zaheer Ahmed

Computer Vision Engineer

Jabra